
Edge Computing

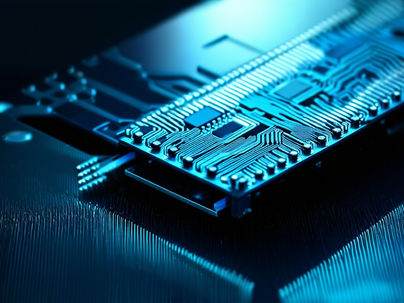

Tensor Parallelism: Distributing Individual Layers Across GPUs

Tensor parallelism distributes individual neural network layers across multiple graphics processing units by splitting weight matrices and activations along specific dimensions to enable concurrent computation. This methodology allows a single layer, which would otherwise exceed the memory capacity of a single device, to be partitioned such that each processor holds a distinct shard of the parameters. The core operation involves a matrix multiplication where the input tensor

Yatin Taneja

Mar 9 · 16 min read

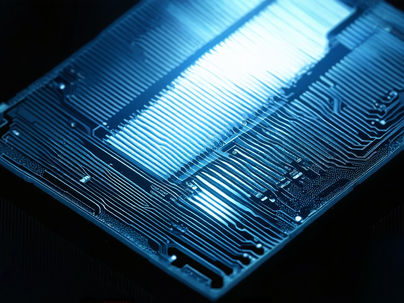

Photonic Neural Networks: Computing with Light

Photonic neural networks utilize photons instead of electrons to execute neural network computations, fundamentally changing the physical medium through which information flows during processing. This substitution enables high-speed processing with minimal energy consumption because photons, being massless bosons, do not experience resistive heating or capacitive charging delays in the same manner as electrons moving through metallic interconnects. The core concept involves r

Yatin Taneja

Mar 9 · 8 min read

Cloud vs. Edge: Where Will Superintelligence Actually Reside?

Cloud computing architectures centralize processing tasks within remote data centers to provide access to extensive computational resources and scalable storage solutions while facilitating simplified software update mechanisms across distributed user bases. This centralization necessitates data transmission over geographical distances, which introduces latency, typically ranging from 10 milliseconds to over 100 milliseconds depending on the physical distance between the clie

Yatin Taneja

Mar 9 · 8 min read

Hypernetworks: Networks That Generate Other Networks

Hypernetworks operate as a distinct class of neural architectures designed explicitly to synthesize the weight parameters for a separate target network, thereby establishing a functional hierarchy where the primary output of one system constitutes the core operational logic of another. This architectural method fundamentally redirects the objective of machine learning from the static optimization of a fixed set of parameters toward the dynamic synthesis of task-specific model

Yatin Taneja

Mar 9 · 9 min read

Photonic Computing: Light-Speed Neural Computation

Photonic computing utilizes photons instead of electrons for data processing to achieve high bandwidth and low latency by using the core physical properties of light to transmit and manipulate information. Traditional electronic processors rely on the movement of charge carriers through conductive materials, a process that inherently generates resistive heat and faces significant signal attenuation at high frequencies due to the skin effect and capacitive coupling between int

Yatin Taneja

Mar 9 · 16 min read

Edge Deployment: Running Superintelligence on Devices

Edge deployment involves executing advanced AI models directly on end-user hardware like smartphones and embedded systems instead of relying on remote cloud servers to process data and generate inferences. This method reduces latency and enhances privacy by keeping data local while enabling functionality in low-connectivity environments where network access is intermittent or entirely unavailable. The primary challenge involves adapting computationally intensive models design

Yatin Taneja

Mar 9 · 10 min read

Edge AI Accelerators: Efficient Inference on Devices

Edge AI accelerators enable on-device inference by processing neural network computations locally, independent of cloud connectivity, ensuring that devices can execute sophisticated machine learning tasks without relying on remote servers. These accelerators are specialized hardware units designed to execute deep learning models with high efficiency, low latency, and minimal power consumption, addressing the stringent constraints of portable and embedded systems. Primary use

Yatin Taneja

Mar 9 · 9 min read

Wisdom of the Edge: Learning from the Fringes

Studies in early 20th-century anthropology and sociology documented knowledge generation at cultural and intellectual peripheries, observing that groups situated away from centers of power often develop distinct practices and beliefs that later influence the mainstream. Post-WWII innovation hubs such as Bell Labs and Xerox PARC operated deliberately outside mainstream corporate structures to promote disruptive ideas, creating physical and intellectual spaces where researchers

Yatin Taneja

Mar 9 · 8 min read

Edge AI

Edge AI refers to the deployment of artificial intelligence algorithms directly on local hardware devices, so that data processing occurs physically close to where the data is generated rather than on centralized cloud servers. This architectural shift lets on-device inference process data immediately at the source, whether that is a smartphone, a wearable, an environmental sensor, or an embedded industrial system. By keeping the data o

Yatin Taneja

Mar 9 · 9 min read

Optical Interconnects: Photonic Communication for AI Clusters

Electrical interconnects based on copper transmission lines encounter severe physical limitations as data rates increase and cluster sizes expand toward exascale performance levels. The resistance of copper conductors rises significantly at high frequencies due to the skin effect, which confines current flow to a thin outer layer of the conductor, thereby increasing effective resistance and signal attenuation. Dielectric losses within the insulating materials surrounding the

Yatin Taneja

Mar 9 · 11 min read
