Cooling Challenge: Thermal Management for Superintelligent Systems

- Yatin Taneja

- Mar 9

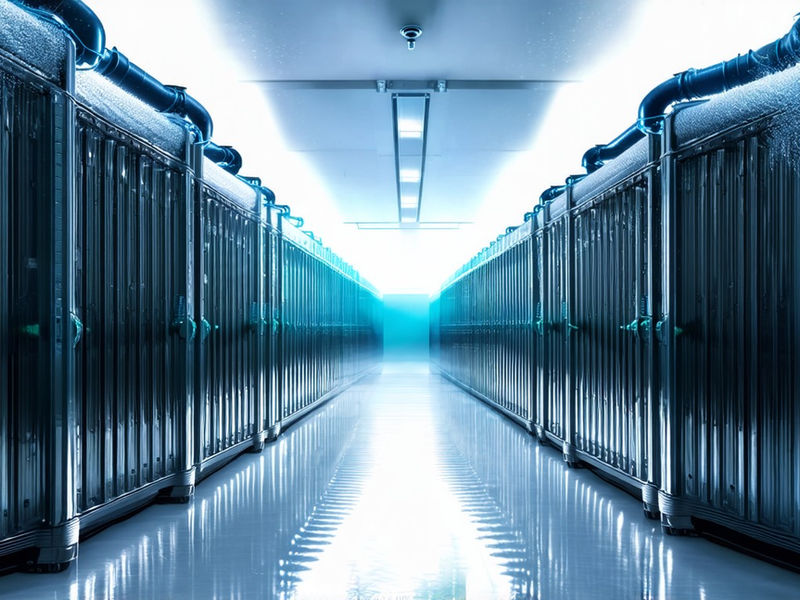

Superintelligent systems will generate heat densities that exceed the removal capacity of conventional thermal management methods, because the physics of information processing dictates that irreversible logic operations dissipate energy, which manifests as heat within the substrate. Extreme compute density creates thermal loads capable of causing immediate hardware failure without effective dissipation, as billions of transistors switching at gigahertz frequencies within a minimal footprint produce localized hotspots capable of surpassing the melting points of semiconductor materials in milliseconds. Heat accumulation leads to thermal throttling and reduced component lifespan in current high-performance clusters, necessitating a shift in thermal architecture where heat removal is treated not as an auxiliary service but as a primary determinant of system performance and reliability. The relentless pursuit of greater computational power has pushed power densities well beyond what standard cooling solutions can handle, creating a scenario where the thermal envelope becomes the limiting factor for scaling intelligence. Traditional air cooling relies on airflow over heat sinks and is limited by the low thermal conductivity of air, which is a poor medium for carrying large quantities of thermal energy away from high-power-density sources because its low density gives it a low heat capacity per unit volume. Air cooling becomes impractical at chip power densities above approximately 100 W/cm² due to insufficient heat transfer rates, a threshold that modern high-performance accelerators and specialized AI processors routinely surpass during peak utilization cycles.

The specific heat capacity of air is significantly lower than that of liquid media, requiring massive volumes of air to be moved at high velocities to achieve meaningful cooling, which in turn introduces significant acoustic noise and mechanical vibration that can destabilize sensitive equipment. As transistor geometries shrink and clock speeds increase, the surface area available for heat dissipation decreases while the power density rises, creating a mismatch that renders air-based solutions fundamentally inadequate for the demands of superintelligent hardware architectures. Liquid cooling introduces fluids with higher thermal conductivity to absorb and transport heat more effectively than air, using the superior physical properties of liquids to achieve orders of magnitude improvement in heat transfer coefficients compared to gaseous media. Direct-to-chip liquid cooling routes coolant through cold plates attached to processors to enable higher heat flux removal, placing the thermal interface in direct proximity to the heat-generating die to minimize thermal resistance across the interface materials. This method allows for the maintenance of lower junction temperatures even under sustained heavy loads, thereby preserving the integrity of the silicon and ensuring consistent computational output without the performance penalties associated with thermal throttling mechanisms found in air-cooled systems. The implementation of direct liquid cooling is a necessary evolution in data center infrastructure, shifting the thermal management framework from convective cooling of the entire environment to conductive extraction of heat at the source.
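The gap between air and liquid can be made concrete with the sensible-heat balance Q = ρ · V̇ · c_p · ΔT. The sketch below is illustrative only: the fluid properties are approximate room-temperature values, and the 1 kW load and 10 K coolant temperature rise are assumptions chosen for the example, not figures from any specific system.

```python
# Volumetric flow needed to carry a heat load at a given coolant temperature rise.
# Q = rho * V_dot * cp * dT  ->  V_dot = Q / (rho * cp * dT)

# Approximate properties near room temperature (assumed, illustrative).
AIR = {"rho": 1.2, "cp": 1005.0}      # kg/m^3, J/(kg*K)
WATER = {"rho": 997.0, "cp": 4180.0}  # kg/m^3, J/(kg*K)


def volumetric_flow_m3_per_s(heat_w, fluid, delta_t_k):
    """Return the coolant volume flow (m^3/s) that absorbs heat_w with a delta_t_k rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_w / (fluid["rho"] * fluid["cp"] * delta_t_k)


heat_w = 1000.0   # hypothetical 1 kW processor
delta_t_k = 10.0  # hypothetical allowed coolant temperature rise

air_flow = volumetric_flow_m3_per_s(heat_w, AIR, delta_t_k)      # about 0.083 m^3/s
water_flow = volumetric_flow_m3_per_s(heat_w, WATER, delta_t_k)  # about 2.4e-5 m^3/s
flow_ratio = air_flow / water_flow                               # roughly 3,500x

print(f"air: {air_flow:.4f} m^3/s, water: {water_flow:.2e} m^3/s, ratio: {flow_ratio:.0f}")
```

Under these assumptions, air needs on the order of thousands of times more volume flow than water for the same heat load, which is the source of the fan noise and vibration described above.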

Two-phase liquid cooling exploits the high latent heat of vaporization for improved efficiency, relying on the thermodynamic principle that a fluid absorbs substantial energy during the transition from liquid to gas without a corresponding rise in temperature. Boiling occurs at the chip surface in two-phase systems and carries away large amounts of energy with minimal temperature rise, creating a highly efficient heat transfer mechanism that can handle the erratic and intense thermal spikes characteristic of advanced neural network inference and training workloads. Managing two-phase flow presents complex engineering challenges, including the prevention of dry-out, where the liquid film evaporates completely and causes a catastrophic spike in surface temperature, which necessitates precise control over pressure and flow rates within the cooling loops. Advanced cold plate designs incorporate micro-structures to increase the number of nucleation sites and promote uniform boiling, ensuring that the phase change process remains stable across the entire surface of the chip. Immersion cooling submerges entire server racks in non-conductive dielectric fluid to allow direct contact with components, eliminating the thermal interface materials and air gaps inherent in traditional cooling setups that impede efficient heat transfer. Single-phase immersion uses circulated liquid without phase change, while two-phase immersion employs fluids that boil at low temperatures, and each offers distinct advantages depending on the specific thermal requirements and complexity tolerance of the hardware being cooled.
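The benefit of phase change can be illustrated by comparing how much energy one kilogram of coolant absorbs by warming up versus by boiling. The sketch below uses water only because its properties are widely known; engineered dielectric fluids have different (generally lower) latent heats, so the numbers are an assumption-laden illustration of the principle rather than a model of any real immersion or cold-plate system.

```python
# Sensible heat (warming a liquid) versus latent heat (boiling it), per kilogram.
# Water values are used purely for illustration; dielectric coolants differ.

CP_WATER_J_PER_KG_K = 4180.0    # approximate specific heat of liquid water
LATENT_WATER_J_PER_KG = 2.257e6  # approximate latent heat of vaporization at 100 C


def sensible_heat_j_per_kg(cp_j_per_kg_k, delta_t_k):
    """Energy absorbed per kg when the liquid warms by delta_t_k without boiling."""
    return cp_j_per_kg_k * delta_t_k


def mass_flow_for_load_kg_per_s(heat_w, energy_per_kg_j):
    """Coolant mass flow needed to absorb heat_w at the given energy uptake per kg."""
    return heat_w / energy_per_kg_j


sensible = sensible_heat_j_per_kg(CP_WATER_J_PER_KG_K, 10.0)  # 41.8 kJ/kg for a 10 K rise
latent = LATENT_WATER_J_PER_KG                                # 2257 kJ/kg at constant temperature
advantage = latent / sensible                                 # roughly 54x

single_phase_flow = mass_flow_for_load_kg_per_s(1000.0, sensible)
two_phase_flow = mass_flow_for_load_kg_per_s(1000.0, latent)

print(f"latent/sensible ratio: {advantage:.1f}")
print(f"1 kW needs {single_phase_flow:.4f} kg/s single-phase, {two_phase_flow:.6f} kg/s boiling")
```

The comparison ignores dry-out and critical heat flux, which in practice cap how much of this advantage a real boiling surface can use.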

By immersing the electronic components directly in the coolant, the system achieves a more uniform temperature distribution, reducing the thermal stress on solder joints and interconnects that often leads to mechanical fatigue and failure over time due to repeated expansion and contraction cycles. The dielectric nature of the fluids used in immersion cooling ensures electrical safety while providing a medium that can absorb heat far faster than air, enabling extreme power densities that would be impossible to achieve with conventional methods. Cryogenic cooling will reduce chip temperatures to liquid-nitrogen levels near 77 K to lower electrical resistance, exploiting the fact that the resistivity of metal interconnects drops significantly at cryogenic temperatures due to reduced phonon scattering. Lower temperatures enable faster switching speeds and reduced active power consumption in future superintelligent hardware, as the mobility of charge carriers increases within the crystal lattice of the semiconductor material, allowing for higher current flow with less energy loss. Cryogenic systems require vacuum-insulated plumbing and materials chosen to tolerate thermal contraction, as the differential shrinkage between dissimilar materials at such low temperatures can cause mechanical fractures and seal failures unless the design uses compatible coefficients of thermal expansion. The deployment of cryogenic cooling introduces significant complexity in terms of infrastructure and maintenance, yet it offers a pathway to break through the performance barriers imposed by room-temperature operation.
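The differential-shrinkage problem mentioned above can be sized with a back-of-the-envelope calculation. The sketch below assumes rough total contraction fractions between room temperature and 77 K for a few common materials; these are approximate, illustrative values introduced here, and a real design should take them from a proper cryogenic materials database.

```python
# Differential thermal contraction between two joined materials cooled from
# roughly 293 K to 77 K. The fractions below are ROUGH, ASSUMED values for
# illustration only; they are not taken from the article.

CONTRACTION_293K_TO_77K = {
    "stainless_steel": 0.0028,  # about 0.28 % shrinkage (assumed)
    "copper": 0.0030,           # about 0.30 % (assumed)
    "aluminium": 0.0039,        # about 0.39 % (assumed)
}


def contraction_mm(material, length_m):
    """Absolute shrinkage in millimetres of a part of the given room-temperature length."""
    return CONTRACTION_293K_TO_77K[material] * length_m * 1000.0


def mismatch_mm(material_a, material_b, length_m):
    """Difference in shrinkage between two parts of equal length, in millimetres."""
    return abs(contraction_mm(material_a, length_m) - contraction_mm(material_b, length_m))


# Hypothetical 1 m aluminium manifold bolted to a stainless steel pipe run.
gap = mismatch_mm("aluminium", "stainless_steel", 1.0)
print(f"shrinkage mismatch over 1 m: {gap:.2f} mm")  # about 1.1 mm under these assumptions
```

A mismatch on the order of a millimetre per metre is large compared with typical seal tolerances, which is why bellows, compliant joints, or matched materials are commonly used in cryogenic plumbing.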

3D chip stacking increases computational density yet traps heat in internal layers due to poor vertical thermal conductivity, so the dies stacked furthest from the heat sink operate at significantly higher temperatures than those on the layer closest to the cooling solution. Heat trapped in stacked dies raises junction temperatures and increases leakage currents, undermining performance gains: because leakage current grows roughly exponentially with temperature, it can negate the power savings expected from the shorter interconnects and reduced logic depth that vertical integration provides. Effective interlayer cooling is necessary to prevent 3D integration from reaching a thermal ceiling below its theoretical limits, requiring microfluidic channels integrated directly within the silicon stack or thermal through-silicon vias optimized for heat transport rather than electrical signaling. Removing heat from the core of a 3D stack is one of the most significant hurdles in the continued scaling of computational architectures. Solving thermal constraints is a co-equal requirement with chip design for achieving scalable superintelligent hardware, mandating a holistic approach where the thermal characteristics of the system influence the logical architecture and vice versa from the earliest stages of development. Current high-performance computing clusters increasingly adopt direct liquid cooling for GPUs and accelerators, reflecting a recognition within the industry that air-based cooling has reached the limits of its scalability with respect to power density and energy efficiency.
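The temperature gradient through a die stack can be approximated with a one-dimensional series thermal-resistance model, in which all heat exits through the top die into the heat sink. The sketch below is a simplification under stated assumptions: the resistance values, die powers, and coolant temperature are hypothetical, and lateral spreading and temperature-dependent leakage are ignored.

```python
# 1D series-resistance model of a 3D die stack cooled only from the top.
# Index 0 is the die nearest the heat sink; the last index is the buried die.

def stack_temperatures_c(die_powers_w, r_sink_k_per_w, r_layer_k_per_w, coolant_c):
    """Return the temperature of each die, top to bottom.

    The sink interface carries the power of every die; each interface between
    adjacent dies carries only the power generated at or below it.
    """
    temps = []
    upstream_c = coolant_c
    for i in range(len(die_powers_w)):
        heat_through_w = sum(die_powers_w[i:])
        resistance = r_sink_k_per_w if i == 0 else r_layer_k_per_w
        upstream_c = upstream_c + resistance * heat_through_w
        temps.append(upstream_c)
    return temps


# Hypothetical stack: four 50 W dies, 0.1 K/W to the coolant, 0.05 K/W per bond layer.
temps = stack_temperatures_c([50.0, 50.0, 50.0, 50.0], 0.1, 0.05, coolant_c=30.0)
print([round(t, 1) for t in temps])  # top die is coolest, buried die is hottest
```

With these assumed values the buried die runs about 15 K hotter than the top die, and the gap widens with every added layer, which is the motivation for interlayer cooling.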

Meta, Google, and NVIDIA have deployed liquid-cooled AI infrastructure with reported PUE improvements of 10–20%, demonstrating that advanced thermal management yields tangible benefits in energy efficiency and operational cost while enabling higher sustained performance levels. These deployments serve as proof of concept for larger-scale implementations, validating the engineering models that predict the necessity of liquid-based solutions for the next generation of computing systems designed for artificial general intelligence. Immersion cooling is currently used in niche applications such as cryptocurrency mining and edge AI deployments, where high power density and harsh environments make traditional cooling methods impractical or inefficient due to dust ingress and limited maintenance access. Two-phase immersion systems demonstrate heat removal capacities exceeding 2,000 W per chip in advanced laboratory settings, suggesting that the technology holds promise for future superintelligent systems that may dissipate similar or greater power per chip. Dominant architectures favor hybrid approaches that use air for low-power components and direct liquid cooling for high-TDP processors, allowing data center operators to balance the capital expenditure of advanced cooling infrastructure against the performance requirements of specific workloads without committing to a complete facility retrofit. This stratification of cooling strategies enables a gradual transition toward full liquid adoption, permitting the integration of new high-density hardware into existing environments with minimal disruption.
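For readers unfamiliar with the metric, PUE (power usage effectiveness) is total facility power divided by IT power, so a value of 1.0 would mean zero overhead. The sketch below shows how a reduction in cooling overhead translates into a percentage PUE improvement; the power figures are hypothetical and are not taken from any of the deployments named above.

```python
# PUE = total facility power / IT equipment power.

def pue(it_power_kw, overhead_power_kw):
    """Power usage effectiveness for a given IT load and non-IT overhead (cooling, losses)."""
    if it_power_kw <= 0:
        raise ValueError("it_power_kw must be positive")
    return (it_power_kw + overhead_power_kw) / it_power_kw


def improvement_percent(pue_before, pue_after):
    """Relative PUE reduction, expressed as a percentage of the starting value."""
    return 100.0 * (pue_before - pue_after) / pue_before


# Hypothetical facility: 1,000 kW of IT load.
air_cooled = pue(1000.0, 500.0)     # 1.50 with 500 kW of overhead
liquid_cooled = pue(1000.0, 250.0)  # 1.25 after halving the overhead
gain = improvement_percent(air_cooled, liquid_cooled)

print(f"PUE {air_cooled:.2f} -> {liquid_cooled:.2f}, improvement {gain:.1f}%")
```

In this made-up case the improvement is about 16.7%, which falls inside the 10–20% range quoted above.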

Integrated microchannel coolers fabricated directly into silicon substrates will enable sub-millimeter proximity between heat sources and coolant, drastically reducing the thermal resistance between the transistor junctions and the cooling fluid by eliminating interface materials entirely. The fabrication of these microchannels involves advanced etching and bonding techniques, such as deep reactive ion etching, to create a network of microscopic passages capable of handling high-pressure coolant flow directly within the die structure without compromising structural integrity. This level of integration represents a convergence of manufacturing and thermal engineering, effectively turning the silicon substrate itself into a highly efficient heat exchanger capable of handling heat fluxes previously thought impossible for conventional designs. The implementation of integrated microchannels requires careful consideration of structural mechanics and fluid dynamics, as the pressure exerted by the coolant within the microscopic channels must be contained without causing die fracture or delamination. Supply chains for advanced coolants depend on a limited number of chemical manufacturers, primarily in the United States, Japan, and Western Europe, creating a concentrated market structure that is vulnerable to disruptions caused by geopolitical instability or natural disasters. Rare earth elements and high-purity copper are required for efficient heat exchangers and create material constraints under scaling pressure, as demand for these materials outstrips the current capacity of mining and refining operations globally.
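The claim that eliminating interface materials sharply reduces thermal resistance can be checked with the one-dimensional conduction formula R = t / (k · A). The sketch below compares a thermal interface layer with a thin layer of silicon between the junction and an in-die channel; the thicknesses, conductivities, die area, and power are all assumed, order-of-magnitude values chosen for illustration.

```python
# 1D conduction resistance R = thickness / (conductivity * area), and the
# temperature drop dT = P * R that a layer imposes at a given power.

def conduction_resistance_k_per_w(thickness_m, conductivity_w_per_m_k, area_m2):
    """Thermal resistance of a flat layer conducting heat through its thickness."""
    return thickness_m / (conductivity_w_per_m_k * area_m2)


DIE_AREA_M2 = 1e-4  # hypothetical 1 cm^2 die
POWER_W = 500.0     # hypothetical die power

# Assumed: 50 um thermal interface material with k = 5 W/(m*K).
r_tim = conduction_resistance_k_per_w(50e-6, 5.0, DIE_AREA_M2)
# Assumed: 100 um of silicon (k about 150 W/(m*K)) between junction and microchannel.
r_silicon = conduction_resistance_k_per_w(100e-6, 150.0, DIE_AREA_M2)

drop_tim_k = POWER_W * r_tim          # about 50 K lost across the interface layer
drop_silicon_k = POWER_W * r_silicon  # about 3.3 K across the silicon

print(f"TIM: {r_tim:.3f} K/W ({drop_tim_k:.1f} K), silicon: {r_silicon:.4f} K/W ({drop_silicon_k:.1f} K)")
```

Under these assumptions the interface layer alone costs roughly fifteen times more temperature headroom than the silicon path, which is the budget that in-die microchannels aim to recover.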

Major players in the liquid cooling market include Vertiv, Schneider Electric, and CoolIT Systems, which provide the specialized infrastructure necessary to implement these complex thermal management solutions at scale while maintaining the reliability standards required by hyperscale data centers. The reliance on specific geographic regions for critical materials introduces risk into the deployment timeline of superintelligent systems, necessitating the development of alternative materials or the diversification of supply sources to ensure continuity of production during periods of high demand. 3M and Solvay dominate the production of dielectric fluids necessary for immersion cooling, holding proprietary formulations that offer the precise balance of thermal stability, dielectric strength, viscosity, and environmental safety required for data center use. Startups such as LiquidStack and Submer specialize in immersion cooling solutions tailored for high-density AI workloads, driving innovation in tank design and fluid management systems that improve the efficiency of the immersion process through advanced separation techniques and heat exchange geometries. Geopolitical tensions affect access to critical materials as manufacturing regions expand domestic semiconductor and cooling infrastructure, leading to trade policies that may restrict the flow of essential components across borders to protect local industries or national security interests. Export controls on high-end thermal management components could delay the deployment of superintelligent systems in certain regions, highlighting the strategic importance of thermal technology in the global technology landscape alongside compute and memory resources.

Academic research focuses on nanofluid coolants and electrohydrodynamic pumping to enhance passive and active cooling, exploring methods to increase the thermal conductivity of base fluids by suspending nanoparticles, or to drive fluid flow with electric fields and no moving mechanical parts. Industrial labs at IBM, Intel, and Samsung collaborate with universities on the co-design of chips and thermal solutions, recognizing that breakthroughs in thermal management require a cross-disciplinary effort that combines theoretical physics with the practical engineering constraints found in commercial data centers. These research initiatives aim to discover novel materials with anomalous thermal properties or to devise mechanisms for moving heat that sidestep the conventional fluid-dynamic limitations imposed by viscosity and pressure drop. The integration of academic insight with industrial capability accelerates the development of next-generation cooling technologies that are essential for supporting the computational demands of artificial general intelligence. Software will adopt thermal-aware scheduling to dynamically allocate workloads based on real-time temperature maps derived from sensors embedded within the processor package, treating thermal headroom as a resource to be managed just like memory or processing cycles. Operating systems and hypervisors will incorporate thermal feedback loops that migrate processes away from hot zones within a server or across a cluster, ensuring that the thermal load is distributed evenly to prevent localized overheating that could trigger emergency shutdowns or permanent damage.
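Thermal-aware scheduling as described above can be sketched as a greedy placement policy: each task goes to the currently coolest zone, and is deferred if that would exceed a temperature limit. This is a minimal illustration, not how any particular operating system or hypervisor implements it; the zone names, the per-task temperature rise, and the limit are all hypothetical.

```python
# Greedy thermal-aware placement: hottest tasks first, each onto the coolest zone.

def assign_tasks(zone_temps_c, task_rise_k, limit_c):
    """Place tasks on zones without exceeding limit_c.

    zone_temps_c: current temperature of each zone, e.g. from on-package sensors.
    task_rise_k: estimated steady-state temperature rise each task adds to a zone.
    Returns (placement, projected_temps); a task maps to None if it must be deferred.
    """
    temps = dict(zone_temps_c)  # copy so the caller's readings are not modified
    placement = {}
    # Handle the largest heat contributors first so they get the most headroom.
    for task, rise in sorted(task_rise_k.items(), key=lambda item: -item[1]):
        coolest = min(temps, key=temps.get)
        if temps[coolest] + rise > limit_c:
            placement[task] = None  # no zone has headroom; defer the task
            continue
        temps[coolest] += rise
        placement[task] = coolest
    return placement, temps


zones = {"zone_a": 60.0, "zone_b": 70.0, "zone_c": 85.0}
tasks = {"train": 15.0, "etl": 10.0, "infer": 5.0}
placement, projected = assign_tasks(zones, tasks, limit_c=90.0)
print(placement)
print(projected)
```

In this example the already hot zone_c receives no new work, while zone_a and zone_b both end at a projected 80 °C. A production scheduler would also need to account for migration cost and for the lag between power changes and temperature changes.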

Regulatory frameworks lag behind the technology with respect to fire codes and environmental regulations for coolant disposal, creating legal uncertainties that complicate the deployment of novel cooling fluids in jurisdictions where existing statutes do not account for their chemical properties or the volumes involved. Software-defined thermal management is a critical layer of abstraction that allows the system to autonomously regulate its temperature, fine-tuning performance while remaining within the safe operational limits defined by hardware physics. Electrical infrastructure must support power densities exceeding 100 kW per rack to accommodate future superintelligent systems, requiring a complete overhaul of the power distribution networks within data centers. Busways, transformers, and uninterruptible power supplies must be upgraded to handle these loads without introducing significant electrical losses or the safety hazards associated with high current flowing through legacy cabling designed for lower densities. Delivering such high power to a single rack necessitates higher voltages and thicker cabling, as well as advanced connectors capable of maintaining reliable contact under thermal cycling without overheating or degrading over time. This transformation of the power infrastructure is a prerequisite for the deployment of superintelligent hardware, as existing facilities lack the capacity to deliver the energy required to drive these systems while simultaneously removing the waste heat they generate.
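The case for higher distribution voltages follows from the three-phase power relation I = P / (√3 · V · PF). The sketch below computes the line current for a 100 kW rack at two common line-to-line voltages; the voltage choices and the 0.95 power factor are assumptions made for illustration, not a recommendation for any specific facility.

```python
import math

# Line current of a balanced three-phase load: I = P / (sqrt(3) * V_line_to_line * PF)

def line_current_a(power_w, line_voltage_v, power_factor=0.95):
    """Return the per-phase line current in amperes for a balanced three-phase load."""
    if not 0 < power_factor <= 1:
        raise ValueError("power_factor must be in (0, 1]")
    return power_w / (math.sqrt(3) * line_voltage_v * power_factor)


RACK_POWER_W = 100_000.0  # the 100 kW per-rack figure discussed above

current_208 = line_current_a(RACK_POWER_W, 208.0)  # roughly 292 A
current_415 = line_current_a(RACK_POWER_W, 415.0)  # roughly 146 A

print(f"208 V: {current_208:.0f} A per phase, 415 V: {current_415:.0f} A per phase")
```

Doubling the voltage halves the current, and since resistive loss scales with the square of current, it cuts cable heating to about a quarter for the same conductor.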

Cooling system failures can cascade into computational errors in superintelligent systems, as elevated temperatures alter the electrical characteristics of transistors and interconnects, leading to timing violations and logic errors that compromise the integrity of the calculations being performed. Fault-tolerant thermal control loops will be essential to maintain system stability during cooling anomalies, employing redundant pumps, sensors, and logic paths to ensure that a failure in one part of the cooling system does not result in a catastrophic overheating event that destroys expensive hardware or corrupts critical model data. The design of these control systems must account for the thermal inertia of the hardware, implementing predictive algorithms that anticipate temperature rises from workload intensity trends before they reach critical levels, rather than reacting solely to current temperature readings. Ensuring reliability in thermal management is crucial, as the cost of downtime or hardware damage in superintelligent systems is far higher than in conventional computing environments due to the complexity and value of the computations being performed. Economic displacement may occur in traditional HVAC sectors as liquid and immersion cooling dominate new data center builds, shifting demand away from large-scale air handling units toward specialized fluid circulation systems and heat rejection infrastructure such as dry coolers or adiabatic towers. New business models will arise around coolant-as-a-service and thermal monitoring platforms, offering managed solutions for the complex task of maintaining optimal thermal conditions in high-density computing environments without requiring end users to develop specialized chemical handling expertise in-house.
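Two of the ideas above, sensor redundancy and prediction ahead of the thermal limit, can be sketched in a few lines. The example takes the median of redundant sensors so that one faulty reading is ignored, and extrapolates the recent temperature trend linearly to decide whether to throttle early. The function names, the five-second horizon, and the linear trend model are assumptions for illustration; a real controller would use a calibrated thermal model.

```python
from statistics import median

# Minimal sketch of a fault-tolerant, predictive thermal check.

def fused_reading_c(sensor_values_c):
    """Median of redundant sensors; a single stuck or wild sensor cannot skew it."""
    return median(sensor_values_c)


def predicted_temp_c(history_c, horizon_s, sample_period_s):
    """Linearly extrapolate the average slope of the history horizon_s into the future."""
    if len(history_c) < 2:
        return history_c[-1]
    elapsed_s = (len(history_c) - 1) * sample_period_s
    slope_k_per_s = (history_c[-1] - history_c[0]) / elapsed_s
    return history_c[-1] + slope_k_per_s * horizon_s


def should_throttle(history_c, limit_c, horizon_s=5.0, sample_period_s=1.0):
    """Throttle when the projected temperature, not just the current one, hits the limit."""
    return predicted_temp_c(history_c, horizon_s, sample_period_s) >= limit_c


# One of three sensors has failed high; the median ignores it.
current = fused_reading_c([75.8, 76.1, 140.0])

# Temperature rising 2 K per second: 76 C now, projected 86 C in five seconds.
rising = [70.0, 72.0, 74.0, 76.0]
act_early = should_throttle(rising, limit_c=85.0)
steady = should_throttle([76.0, 76.0, 76.0, 76.0], limit_c=85.0)

print(current, act_early, steady)
```

The rising trace triggers throttling while the current reading is still 9 K below the limit, which is the behavior the text calls for when thermal inertia makes purely reactive control too slow.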

Key performance indicators must expand beyond PUE to include Thermal Resistance and Cooling Energy Ratio, providing a more comprehensive view of the efficiency and effectiveness of the thermal management infrastructure relative to the computational output achieved per watt of energy consumed for cooling purposes alone. This economic evolution reflects a broader trend toward specialization in the data center industry. Future innovations may integrate thermoelectric coolers or photonic heat sinks for advanced thermal management, utilizing solid-state devices or light-based heat transport to move energy away from sensitive components without relying on bulk fluid flow, which introduces mechanical complexity and potential leak points. On-chip micro-refrigeration using MEMS-based compressors will provide localized cooling for high-power transistors, targeting hotspots with pinpoint accuracy to maintain uniform temperature across the die surface despite highly non-uniform power distributions typical of neural network accelerator architectures. Convergence with quantum computing is likely as both fields require ultra-low temperatures and precise thermal control, sharing common challenges in cryogenics and isolation from environmental thermal noise that can disrupt sensitive quantum states or degrade classical signal integrity at nanometer scales. These advanced technologies represent the cutting edge of thermal engineering.
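The two additional metrics named above can be expressed as simple ratios. The sketch below uses the standard junction-to-coolant definition of thermal resistance; the article does not define Cooling Energy Ratio, so the version here (cooling energy divided by IT energy) is an assumed definition, and all input figures are hypothetical.

```python
# Two thermal KPIs as simple ratios. The Cooling Energy Ratio definition is an
# ASSUMPTION made for this sketch; the article does not specify one.

def thermal_resistance_k_per_w(junction_c, coolant_c, power_w):
    """Junction-to-coolant thermal resistance: temperature rise per watt dissipated."""
    if power_w <= 0:
        raise ValueError("power_w must be positive")
    return (junction_c - coolant_c) / power_w


def cooling_energy_ratio(cooling_energy_kwh, it_energy_kwh):
    """Assumed definition: energy spent on cooling per unit of IT energy delivered."""
    if it_energy_kwh <= 0:
        raise ValueError("it_energy_kwh must be positive")
    return cooling_energy_kwh / it_energy_kwh


# Hypothetical processor: 85 C junction, 35 C coolant inlet, 500 W.
r_theta = thermal_resistance_k_per_w(85.0, 35.0, 500.0)  # 0.1 K/W

# Hypothetical day of operation: 120 kWh of cooling for 1,000 kWh of IT load.
cer = cooling_energy_ratio(120.0, 1000.0)  # 0.12

print(f"thermal resistance: {r_theta:.2f} K/W, cooling energy ratio: {cer:.2f}")
```

Unlike PUE, which lumps all facility overhead together, these two figures separate how well heat leaves the chip from how much energy the cooling plant spends doing it.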

The physical limits on scaling include the conduction limit set by Fourier's law and the critical heat flux in boiling systems, fundamental boundaries beyond which heat transfer becomes inefficient or impossible regardless of the engineering effort applied or materials used. Cooling fails catastrophically beyond these limits, leading to phenomena such as film boiling, in which a layer of vapor insulates the heat source and causes rapid temperature escalation, resulting in device destruction through thermal runaway. Workarounds will involve heterogeneous integration to separate hot and cold functions across dies, physically isolating high-power processing elements from temperature-sensitive memory or analog circuitry so that components are placed according to their thermal characteristics rather than functional adjacency alone. Understanding and operating within these physical constraints is essential for the design of reliable superintelligent hardware. Dynamic voltage and frequency scaling will help manage thermal loads during peak computation, allowing the system to temporarily reduce power consumption when temperatures approach critical thresholds, trading marginal performance for sustained stability over longer durations. Algorithmic sparsity will reduce heat generation by minimizing active operations per cycle, taking advantage of the fact that zero values or irrelevant connections can be skipped and therefore do not contribute to the thermal load associated with charging and discharging capacitive nodes and interconnects within the logic.
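Both dynamic voltage and frequency scaling and sparsity act on the standard CMOS dynamic power relation P = α · C · V² · f, where α is the fraction of capacitance switched per cycle. The sketch below shows the effect of each lever; the capacitance, voltage, frequency, and activity values are hypothetical, and static leakage power is deliberately left out.

```python
# CMOS dynamic power: P = activity * C * V^2 * f (leakage not modelled).

def dynamic_power_w(activity, capacitance_f, voltage_v, frequency_hz):
    """Switching power for a given activity factor, capacitance, voltage, and clock."""
    return activity * capacitance_f * voltage_v ** 2 * frequency_hz


# Hypothetical baseline block: 20 % activity, 1 nF switched, 1.0 V, 2 GHz.
baseline = dynamic_power_w(0.2, 1e-9, 1.0, 2e9)

# DVFS: lower voltage and frequency by 10 % each -> power scales by 0.9^3.
dvfs = dynamic_power_w(0.2, 1e-9, 0.9, 1.8e9)
dvfs_ratio = dvfs / baseline  # about 0.729

# Sparsity: skipping half of the operations halves the activity factor.
sparse = dynamic_power_w(0.1, 1e-9, 1.0, 2e9)
sparse_ratio = sparse / baseline  # 0.5

print(f"baseline {baseline:.3f} W, DVFS x{dvfs_ratio:.3f}, sparsity x{sparse_ratio:.2f}")
```

A 10% slowdown buys roughly a 27% reduction in dynamic power because voltage enters squared, which is why DVFS is an effective response to short thermal excursions.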

Thermal management acts as a foundational layer in the architecture of superintelligent hardware, influencing everything from the physical layout of the chip to the algorithms that run upon it by dictating what operations are physically possible within a given energy budget. Designing for superintelligence requires treating temperature as a first-class design variable. Temperature will be co-optimized with logic, memory, and interconnect design in future systems, breaking down the traditional silos between engineering disciplines to create a unified design methodology that addresses thermal and electrical performance simultaneously rather than treating heat as an afterthought. Superintelligent systems will actively modulate their own thermal output through adaptive computation, adjusting their processing intensity based on real-time feedback from their thermal environment to maintain optimal operating conditions without human intervention or external control systems acting as intermediaries. These systems will prioritize energy-efficient pathways when cooling capacity becomes the binding constraint, ensuring that computation continues even under suboptimal thermal conditions by selecting algorithms or hardware configurations that generate less heat per unit of useful work performed. This self-regulating capability marks a maturation of computing technology in which the machine manages its physical state as autonomously as it manages its logical tasks.