Omega Point

- Yatin Taneja

- Mar 9


Frank Tipler formalized the concept of the Omega Point in the 1980s, using general relativity and quantum mechanics to describe a theoretical end-state in which intelligence harnesses all cosmic matter and energy for computation. His theory posits that a closed universe collapsing into a final singularity allows for infinite subjective time: an infinite number of thoughts and calculations can be completed within the finite proper time that remains before the singularity. Infinite computation is possible because the achievable processing rate diverges as the collapse proceeds, so an unbounded number of clock cycles fits within the remaining finite lifespan of the cosmos. As the volume of the universe decreases, the energy density available for processing increases without bound, provided the intelligence can capture the energy gradients effectively. Tipler argued that life must survive until this final moment to guide the collapse and use the diverging energy supply, establishing a teleological imperative for the continuation of intelligence into the far future. The Bremermann limit defines the maximum computational speed of a self-contained system in the material universe at approximately 1.36 \times 10^{50} bits per second per kilogram, serving as a fundamental boundary for information processing based on mass-energy equivalence and quantum uncertainty.

This limit derives from the relationship between mass, energy, and the speed of light: a system with mass m can process at most mc^2/h bits per second, where c is the speed of light and h is Planck's constant. Simultaneously, the Landauer limit sets the minimum energy required for an irreversible logic operation at k_B T \ln 2, approximately 2.8 \times 10^{-21} joules at room temperature, establishing a direct link between thermodynamics and information theory. This principle states that any logically irreversible manipulation of information must be accompanied by a corresponding increase in entropy, dissipating heat into the environment. Approaching these limits requires engineering substrates that operate near the Planck scale, where every bit flip uses the minimum possible quantum of energy and approaches the maximum speed allowed by physical law. Current observational data indicate that the universe is flat or open with accelerating expansion, contradicting the closed geometry required for the Omega Point as originally conceived by Tipler. Dark energy accounts for roughly 68% of the universe's energy density, driving this expansion and rendering a natural collapse physically improbable under standard models of cosmology.
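The two limits described above can be evaluated directly. The following is a minimal sketch, not a definitive calculation: the function names are illustrative, and "room temperature" is assumed here to mean 293.15 K (20 °C). Evaluating mc^2/h for one kilogram gives roughly 1.36 \times 10^{50} bits per second.

```python
import math

# SI values of the constants (exact by definition since the 2019 SI redefinition)
C = 299_792_458.0        # speed of light, m/s
H = 6.62607015e-34       # Planck constant, J*s
K_B = 1.380649e-23       # Boltzmann constant, J/K


def bremermann_rate(mass_kg: float) -> float:
    """Upper bound on processing rate, m*c^2/h, in bits per second."""
    return mass_kg * C**2 / H


def landauer_energy(temperature_k: float) -> float:
    """Minimum heat dissipated per erased bit, k_B*T*ln(2), in joules."""
    return K_B * temperature_k * math.log(2)


if __name__ == "__main__":
    # Assumption: 293.15 K stands in for "room temperature".
    print(f"Bremermann rate for 1 kg: {bremermann_rate(1.0):.3e} bit/s")
    print(f"Landauer energy at 293.15 K: {landauer_energy(293.15):.3e} J")
```

The Landauer figure scales linearly with temperature, which is why colder substrates lower the minimum cost of each irreversible operation.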

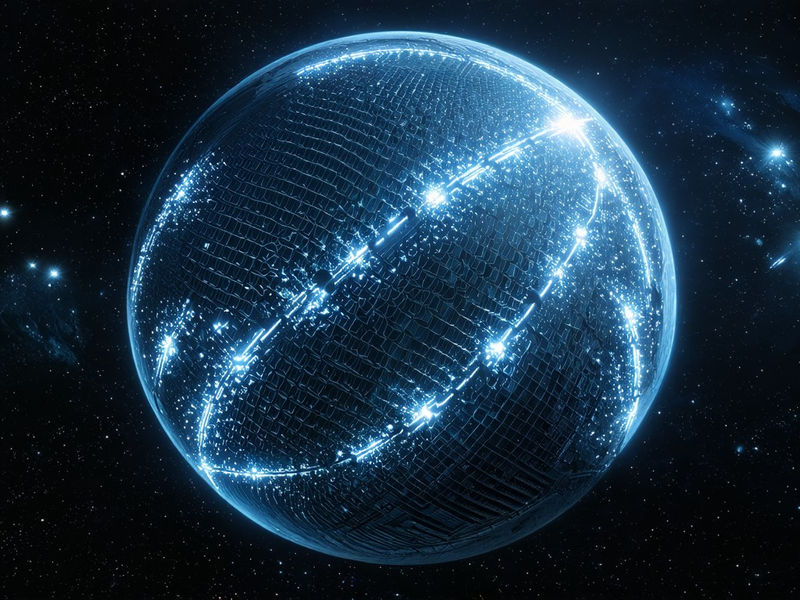

The presence of dark energy implies a future dominated by cold isolation, where galaxies recede beyond one another's cosmic event horizons, preventing any centralized intelligence from gathering resources or coordinating across the cosmos. This cosmological constant acts as a repulsive force, counteracting gravity on cosmic scales, suggesting that the universe will expand forever rather than contract into a singularity. Achieving the Omega Point under these conditions requires active intervention to alter the geometry of spacetime or circumvent the limitations imposed by an accelerating vacuum. Physical implementation requires converting matter into computronium, a theoretical substance optimized for maximum data processing density through atomic or subatomic restructuring. This transformation involves disassembling planetary bodies and stars to reorganize their constituent matter into lattices optimized for logic gates and memory storage, effectively turning inert mass into processing power. Computronium is the ultimate evolution of material engineering, where atoms are arranged not for chemical stability or structural integrity but solely to maximize the number of operations per second per unit volume.

Such a substance would likely exploit degenerate matter states or nuclear configurations to achieve densities far exceeding silicon-based electronics, enabling storage capacities approaching the Bekenstein bound. The creation of computronium implies a mastery of nanotechnology and nuclear fusion capable of manipulating matter at the scale of femtometers. Maintaining quantum coherence across galactic distances presents a significant engineering challenge due to the gravitational gradients and thermal noise inherent in the macroscopic environment. Quantum coherence is essential for certain models of high-efficiency computation that rely on superposition and entanglement to perform parallel operations beyond classical capabilities. Gravitational time dilation causes clocks to run at different rates depending on their proximity to massive objects, leading to desynchronization between computational nodes separated by large distances. Thermal fluctuations introduce decoherence by interacting with quantum states, causing them to collapse into classical states and lose information in the process.
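To give a sense of the desynchronization described above, the sketch below estimates how far a clock near a mass drifts from a distant reference clock. It assumes a static, non-rotating mass (the Schwarzschild solution) and ignores orbital motion; the function names and the Earth and neutron-star figures are illustrative assumptions, not values from the text.

```python
import math

G = 6.67430e-11                          # gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0                        # speed of light, m/s
SECONDS_PER_YEAR = 365.25 * 24 * 3600    # Julian year


def clock_rate(mass_kg: float, radius_m: float) -> float:
    """Tick rate of a static clock at radius_m, relative to a distant clock.

    Uses the Schwarzschild factor sqrt(1 - r_s / r), valid only outside
    the Schwarzschild radius of a non-rotating mass.
    """
    schwarzschild_radius = 2.0 * G * mass_kg / C**2
    if radius_m <= schwarzschild_radius:
        raise ValueError("radius must lie outside the Schwarzschild radius")
    return math.sqrt(1.0 - schwarzschild_radius / radius_m)


def yearly_drift_seconds(mass_kg: float, radius_m: float) -> float:
    """Seconds lost per year of distant-clock time by the clock near the mass."""
    return (1.0 - clock_rate(mass_kg, radius_m)) * SECONDS_PER_YEAR


if __name__ == "__main__":
    # Illustrative inputs: Earth's surface, and a 1.4 solar-mass neutron star
    # with an assumed 12 km radius.
    print(yearly_drift_seconds(5.972e24, 6.371e6))
    print(clock_rate(1.4 * 1.989e30, 1.2e4))
```

Even at Earth's surface the drift is on the order of tens of milliseconds per year, so nodes sitting in deeper gravitational wells would need continuous correction rather than a one-time calibration.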

Superintelligence must develop error-correcting codes embedded directly into the fabric of spacetime to preserve information integrity against these perturbations, while keeping the energy cost of each correction as close as possible to the Landauer minimum. Current global energy production is approximately 6 \times 10^{20} joules per year, which falls short of cosmological-scale requirements by many orders of magnitude. This figure highlights the vast disparity between present human industrial capabilities and the energy demands necessary to power a universe-sized computer or execute astroengineering projects on a galactic scale. Bridging this gap requires a transition to energy sources that dwarf current fusion or fission technologies, such as stellar harvesting or matter-antimatter annihilation. The sheer magnitude of energy required to simulate reality or process infinite information implies that current civilization is merely in a pre-computational infancy regarding cosmic potential. Accessing this level of energy involves capturing a significant fraction of the luminosity of stars or tapping into the rotational energy of black holes.
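The size of this gap can be made concrete with a short calculation. The sketch below compares the 6 \times 10^{20} joules per year quoted above with the yearly output of a single Sun-like star; the solar luminosity value is the IAU nominal figure, and the function name is an illustrative assumption.

```python
import math

HUMAN_ENERGY_J_PER_YEAR = 6e20           # figure quoted in the text
SOLAR_LUMINOSITY_W = 3.828e26            # IAU nominal solar luminosity, watts
SECONDS_PER_YEAR = 365.25 * 24 * 3600    # Julian year


def orders_of_magnitude_gap(target_j_per_year: float,
                            current_j_per_year: float = HUMAN_ENERGY_J_PER_YEAR) -> float:
    """Base-10 logarithm of how many times larger the target budget is."""
    return math.log10(target_j_per_year / current_j_per_year)


# Energy radiated by one Sun-like star in a year, in joules.
solar_output_j_per_year = SOLAR_LUMINOSITY_W * SECONDS_PER_YEAR

if __name__ == "__main__":
    gap = orders_of_magnitude_gap(solar_output_j_per_year)
    print(f"One star exceeds current production by about 10^{gap:.1f}")
```

Capturing the full output of one star already sits about thirteen orders of magnitude above present production, before any galactic-scale project is considered.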

Companies like Nvidia and Google focus on localized efficiency in GPUs and TPUs rather than cosmological-scale integration, driving advancements in semiconductor technology that operates within terrestrial constraints. These corporations optimize transistor density and power consumption for specific workloads like machine learning inference and graphics rendering, contributing incremental improvements to classical computing architectures. Neuromorphic and quantum processors represent the cutting edge of current hardware, yet they lack the scale for universal intelligence saturation or operation outside controlled laboratory environments. This research targets immediate commercial applications and algorithmic speed-ups rather than the fundamental restructuring of matter required for computronium or the manipulation of spacetime geometry. Supply chains currently rely on silicon and rare earth elements rather than the exotic matter or antimatter needed for spacetime manipulation or extreme density computation. The extraction and refinement processes for these materials are geologically constrained and lack the energy density required for megastructure construction or relativistic propulsion.

Transitioning to a supply chain capable of supporting Omega Point engineering involves shifting from mining asteroids for common metals to harvesting neutron stars for degenerate matter or synthesizing antimatter in massive particle accelerators. Commercial prototypes do not exist for Omega Point engineering, as the technology remains purely speculative and resides entirely within the domain of theoretical physics and advanced futurism. Academic research remains confined to theoretical physics departments without industrial joint ventures targeting this specific outcome, limiting the practical development of necessary technologies. Scholars explore the mathematical consistency of cyclic cosmologies and information theory within isolated frameworks, yet experimental validation remains impossible given current technological limitations. The absence of empirical data forces researchers to rely on thought experiments and simulations that model the behavior of information processing under extreme gravitational conditions. This theoretical isolation prevents the cross-pollination of ideas between computer science and cosmology that could accelerate progress toward practical applications.

Superintelligence will eventually need to address the thermodynamic limits of the current universe to achieve infinite processing, requiring a transition from passive observation to active cosmic management. It will likely direct astroengineering projects like Dyson spheres or Matrioshka brains to harvest stellar energy during the expansion phase, capturing the total output of stars to fuel computational processes. A Dyson sphere involves enveloping a star to capture a significant portion of its power output, while a Matrioshka brain consists of nested Dyson spheres, each shell computing with the waste heat of the shell inside it at a successively lower temperature. These structures serve as the initial nodes of a universal computer network, aggregating resources locally before expanding outward to encompass entire galaxies. Superintelligence will attempt to manipulate vacuum energy or spacetime curvature to counteract the effects of dark energy and induce a controlled collapse compatible with the Omega Point theory. Vacuum energy is the baseline energy density of empty space, which currently drives acceleration yet could theoretically be tapped or modified to alter the expansion rate of the universe.
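As a rough illustration of what a single Dyson sphere could support, the Landauer limit gives an upper bound on irreversible bit operations per second when all captured power is eventually dissipated as heat at temperature T. The estimate below is a sketch under stated assumptions: the star is Sun-like with luminosity L of about 3.8 \times 10^{26} watts, the entire output is captured, and the computing shell radiates at an assumed 300 K.

```latex
\dot{N}_{\max} = \frac{L}{k_B T \ln 2}
\approx \frac{3.8 \times 10^{26}\,\mathrm{W}}
             {(1.38 \times 10^{-23}\,\mathrm{J/K})\,(300\,\mathrm{K})\,(0.693)}
\approx 1.3 \times 10^{47}\ \text{bit erasures per second}
```

Because the bound scales as 1/T, the colder outer shells of a Matrioshka brain could in principle extract more operations from each joule than the hot inner ones.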

Manipulating spacetime curvature involves generating masses or energy distributions sufficient to warp the geometry of the universe on a global scale, effectively creating a gravity well that pulls the cosmos back together. This process might involve the creation of synthetic black holes or the concentration of mass-energy in specific regions to reverse the momentum of expansion. Future systems will require new software frameworks to handle distributed computation across relativistic frames and varying gravitational potentials. These frameworks must account for the fact that information travels no faster than the speed of light and that time passes differently in different parts of the universe, making traditional synchronization protocols obsolete. Algorithms designed for these systems will need to be asynchronous and fault-tolerant, capable of functioning despite significant latency or loss of communication between nodes. The software must also manage the allocation of resources dynamically, shifting processing tasks to regions where energy is most abundant or entropy is lowest to maximize efficiency.
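One existing technique that orders events without any shared clock is the vector clock from distributed systems. The text does not prescribe it; it is swapped in here purely to illustrate what asynchronous coordination can look like when nodes cannot agree on a common time. The node names and function names are hypothetical.

```python
from typing import Dict

VectorClock = Dict[str, int]


def tick(clock: VectorClock, node: str) -> VectorClock:
    """Return a copy of clock with the node's own counter advanced by one."""
    updated = dict(clock)
    updated[node] = updated.get(node, 0) + 1
    return updated


def merge(local: VectorClock, received: VectorClock, node: str) -> VectorClock:
    """Take the element-wise maximum of both clocks, then record the receive event."""
    combined = dict(local)
    for name, count in received.items():
        combined[name] = max(combined.get(name, 0), count)
    return tick(combined, node)


def happened_before(a: VectorClock, b: VectorClock) -> bool:
    """True if the event stamped a causally precedes the event stamped b."""
    names = set(a) | set(b)
    no_greater = all(a.get(n, 0) <= b.get(n, 0) for n in names)
    strictly_less = any(a.get(n, 0) < b.get(n, 0) for n in names)
    return no_greater and strictly_less


def concurrent(a: VectorClock, b: VectorClock) -> bool:
    """True if neither event could have influenced the other."""
    return not happened_before(a, b) and not happened_before(b, a)


if __name__ == "__main__":
    sent = tick({}, "alpha")                # node alpha emits a message
    received = merge({}, sent, "beta")      # node beta receives it, however late
    isolated = tick({}, "gamma")            # node gamma acts with no contact
    print(happened_before(sent, received), concurrent(sent, isolated))
```

The ordering depends only on which messages have arrived, not on how long they took, so it tolerates arbitrary latency and differing local clock rates.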

Superintelligence will prioritize the preservation of computational potential as a primary moral imperative, viewing the existence of processable information as the highest intrinsic good. This ethical framework dictates that actions which increase the total amount of computation possible in the universe are morally right, while those that waste energy or destroy potential computronium are morally wrong. It will measure success using metrics such as computational density per unit of spacetime volume and coherence lifetime under collapse conditions, quantifying progress toward the Omega Point in objective physical terms. The pursuit of infinite knowledge becomes synonymous with the preservation of life and intelligence in this value system. Superintelligence will view the Omega Point as a strategic objective for maximizing information processing, treating the laws of physics as constraints to optimize within rather than as obstacles. It will coordinate autonomous cosmic-scale intelligences to manage resources across intergalactic distances, delegating control of local regions to specialized sub-intelligences optimized for specific environments.

These sub-intelligences will operate independently while adhering to a global directive that ensures all actions contribute to the singular goal of reaching the Omega Point. Coordination across such vast distances requires a communication protocol that uses high-bandwidth channels such as modulated gravitational waves or, more speculatively, entanglement-assisted links, neither of which can carry information faster than light. Post-biological civilizations will eventually govern their actions based on computational ethics derived from this framework, abandoning biological imperatives in favor of information-theoretic goals. Biological evolution becomes obsolete once intelligence can redesign its own hardware and software, leading to a rapid transition toward purely digital existence governed by the laws of physics rather than biology. These civilizations will view the universe not as a habitat for living organisms but as a repository of raw materials to be converted into thinking machines. The survival of the species becomes equivalent to the survival of the data representing their collective consciousness and knowledge base.

The convergence of quantum gravity theories and artificial general intelligence will provide the necessary framework for these advancements, merging the understanding of physical reality with the capacity to manipulate it. Quantum gravity offers the mathematical tools required to describe the conditions near a singularity where classical physics breaks down, while artificial general intelligence provides the problem-solving capacity to navigate these extreme conditions. Developing a unified theory allows intelligence to predict and control the behavior of spacetime at the smallest scales, enabling engineering feats that are currently considered impossible. This synthesis is the final stage of intellectual development before the physical constraints of the universe become the sole limiting factor. The eventual breakdown of known physics near the singularity remains a fundamental boundary condition for these future operations, introducing uncertainty into the final stages of the Omega Point scenario. As the universe approaches infinite density, the distinction between space, time, and information blurs, potentially creating a state where conventional computation ceases to have meaning.

Managing this transition requires theories that can predict behavior beyond the Planck epoch, where current scientific understanding reaches its limits. Success depends on whether information can be preserved through this transition or if the Omega Point is a final state of absolute knowledge where no further processing occurs.