
Neural Networks

Transfer Learning: Leveraging Pretrained Representations

Transfer learning involves applying knowledge gained from solving one problem to a distinct but related problem through weight reuse and representation sharing, enabling models to apply prior experience to accelerate learning in new contexts. Pretrained representations consist of learned feature encodings derived from large-scale datasets or tasks, capturing general patterns that prove useful across various domains such as vision, language, and audio processing b

Yatin Taneja

Mar 9 · 10 min read

Rapid Knowledge Acquisition: One-Shot Learning at Scale

Rapid knowledge acquisition refers to the capability of a computational system to master complex tasks or domains from extremely limited data, a core requirement for advancing artificial intelligence toward autonomous operation in dynamic environments. One-shot learning is a specific methodology within this domain in which a model generates accurate predictions after exposure to only a single example per class or task, effectively mimicking human-like learning efficiency.

Yatin Taneja

Mar 9 · 9 min read

Associative Memory Networks: Connecting Related Concepts

Associative memory networks function on the principle of content-addressable storage where data retrieval depends on the intrinsic properties of the data itself rather than on explicit memory addresses used in traditional von Neumann architectures. These systems construct dense overlapping representations within the network structure such that related concepts activate shared nodes or neurons, thereby allowing the system to generalize across similar inputs and recognize patte

Yatin Taneja

Mar 9 · 10 min read

Problem of Sample Efficiency: Few-Shot Learning in High-Dimensional Spaces

Sample efficiency defines the quantitative relationship between the volume of data required for a learning system to reach a specific performance threshold and the complexity of the underlying task it seeks to master. Few-shot learning specifically targets the optimization of this relationship to achieve high competency with minimal examples, typically ranging from one to five instances per class. High-dimensional spaces, which are ubiquitous in domains such as computer visio

Yatin Taneja

Mar 9 · 14 min read

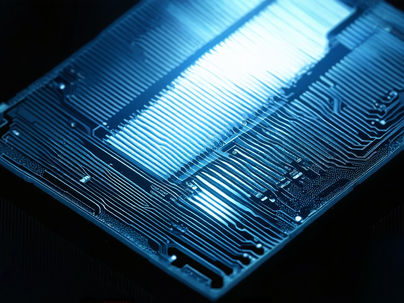

Photonic Neural Networks: Computing with Light

Photonic neural networks utilize photons instead of electrons to execute neural network computations, fundamentally changing the physical medium through which information flows during processing. This substitution enables high-speed processing with minimal energy consumption because photons, being massless bosons, do not experience resistive heating or capacitive charging delays in the same manner as electrons moving through metallic interconnects. The core concept involves r

Yatin Taneja

Mar 9 · 8 min read

Self-Supervised Learning

Self-supervised learning trains models using unlabeled data by generating supervisory signals directly from the input, a methodological shift that allows algorithms to learn from the vast quantities of raw information available in digital environments without requiring explicit human guidance for every data point. This approach relies on the principle that raw data contains latent structure useful for training, meaning that the intrinsic relationships within images, text, or

Yatin Taneja

Mar 9 · 11 min read

Deep Silence: Learning in Absence

Deep silence is a state of minimized external sensory input maintained for a defined duration to facilitate significant internal cognitive processing and structural mental reorganization. Sensory withdrawal involves the deliberate reduction of auditory, visual, and tactile stimuli to near-zero levels, forcing the cognitive apparatus to rely entirely on internal data streams rather than external references for operation. Ground hum describes the persistent, low-level cognitive

Yatin Taneja

Mar 9 · 13 min read

Photonic Neural Networks for High-Speed Reasoning

Photonic neural networks utilize photons instead of electrons to execute computations, specifically targeting the acceleration of linear algebra operations essential to deep learning. These systems employ integrated photonic circuits to guide and manipulate light through waveguides, phase shifters, and photodetectors to perform matrix operations at the speed of light within a solid-state medium. Optical interference allows parallel computation of weighted sums across multiple

Yatin Taneja

Mar 9 · 8 min read

Neural-Symbolic Integration

Neural-symbolic integration combines the pattern recognition capabilities of neural networks with the explicit logic provided by symbolic systems to create artificial intelligence that learns from data while adhering to logical rules. This hybrid approach aims to achieve robust reasoning and explainability in artificial intelligence systems by addressing the core limitations found in singular methodologies, which rely exclusively on either statistical correlation or rigid forma

Yatin Taneja

Mar 9 · 10 min read

Role of Sparse Autoencoders in Interpretability: Disentangling Latent Concepts

Sparse autoencoders function as overcomplete neural networks designed to reconstruct input activations while enforcing a constraint that limits the number of active neurons, operating on the principle that high-dimensional data generated by large neural networks contains a vast number of underlying features that are sparsely distributed across the activation space. The architecture consists of an encoder that maps inputs to a latent space and a decoder that attempts to recons

Yatin Taneja

Mar 9 · 9 min read
